1. The unfulfilled promises of next-gen tech

In certain circles, which I like to call “right-wing tech” (you might just call it corporate), new, proprietary tech tools have become all the rage.

We now have courses dedicated to no-code and to generative AI. That’s probably a good thing: those tools are part of the tech landscape, they’re not going to disappear tomorrow, and students should be aware of them.

The problem, IMHO, is that students are being served the marketing side of those tools:

- No-code helps you build apps and websites orders of magnitude faster

- You don’t need SQL anymore because now you have Airtable

- AI is going to make you orders of magnitude faster at writing code!

- You don’t need a developer anymore to do all those basic things!

It’s even a bit paradoxical, right? We’re teaching students to code while also trying to show them that code is useless because we have no-code now. I’m sure quite a few of my students are currently wondering what the f*ck this is all about.

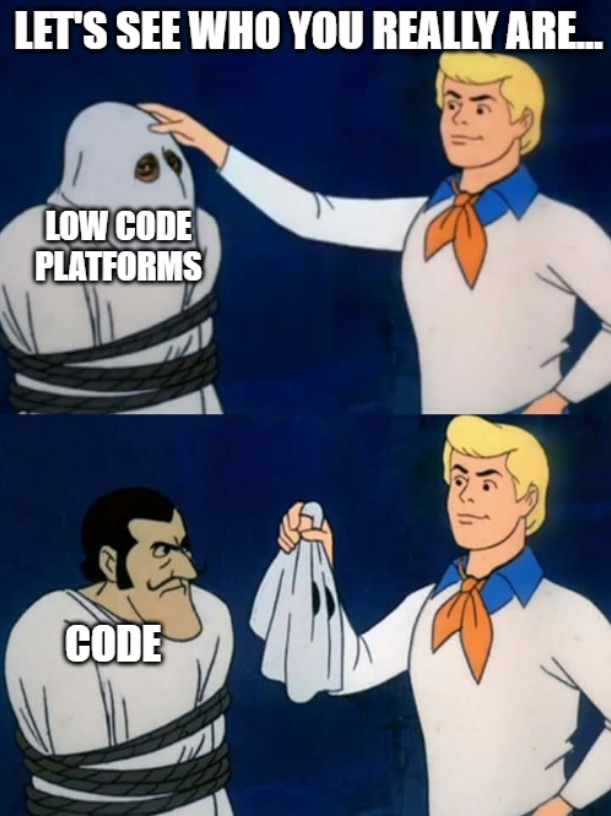

But there’s a reason we are teaching no-code tools to future developers, right? And the reason is: those tools hide complexity, but they do not solve it. As time passes, they become more and more complex themselves, requiring you to study them to be able to use them effectively. And that’s far, far away from the original promise that “you don’t need to learn code anymore”. You’re not learning code per se, but you are learning something awfully similar, which you can’t use anywhere outside those walled gardens.

2. The walled gardens of proprietary platforms

All the tools we hear of (Webflow, Airtable, Zapier, Softr, Glide, FlutterFlow, even Firebase) share one common trait: they’re freemium, proprietary tools.

You start using them for free out of curiosity on pretty simple use cases, discover how powerful and simple they are compared to the alternatives (HTML, JS, SQL, PHP, C#…), fall into the trap, and start using them for real-world apps.

And that’s when reality catches up:

- You start paying far more than you would have paid a developer for a custom “classic” in-house app (spread out in monthly payments, but still), in proportion to the number of users, the volume of data stored and transferred, the number of automation runs, etc.

- Problems arise that you cannot solve, simply because the underlying layer has been hidden from you: performance issues, weird behaviours, etc.

- If you want to solve them, you need to hire an expert: namely, a “no-code developer”. Somebody who has studied that particular tool at a professional level and can help you do the inherently hard things necessary to make it right. There are now WordPress and Shopify experts, when the premise was “make a website/online shop without being an expert”.

- Sometimes, after you’ve spent time and energy building your app, you realize the tool is just not fit for your use case, and you need to start over from scratch with a regular tech stack, because of course you can’t just walk away with what you’ve built and export it to another tool. The company you’re buying this simplification from has no interest in helping you migrate elsewhere.

All in all, you’ve spent time and energy making a prototype when the promise was “an entire, fully functional app”. Real-world use cases are always a lot more complex than what’s on paper, and that complexity can only be “hidden away” from you. Not removed, not reduced. Hidden.

What is complex remains complex no matter who you pay to handle the complexity. But you can either pay someone who’s there to help, who will charge you for building the app plus a flat fee for maintenance, or a nebulous platform that won’t help in any way, will generate hidden problems on its own, does everything it can to keep you from migrating away, and will charge you monthly, forever, in proportion to your user base. Your choice, really! 😅

3. Everybody does it, so it’s better, right?

We’ve seen that before, with the rise of the Cloud(™️). At some point, a large portion of the industry had an interest in making you think you couldn’t handle infrastructure by yourself, and that everything was better off in the cloud.

Now? Most of our apps and data are stored and run on servers belonging to Amazon, Google, and a few other players, when a ~50€ Raspberry Pi at home could be enough for your use case, with optional backups at a friend’s place running a similar setup. But we don’t teach that to students anymore, do we?

Don’t get me wrong: I’m not saying the cloud is always a trap, or that it’s better to self-host everything. I’m saying that the cloud has become the de facto solution to everything, and that we’re losing the skill to self-host on inexpensive infrastructure in favour of blindly handing our apps and data to digital landlords when it might not be necessary.

4. Our tools cannot rival our brains

AI is also not gonna reduce complexity. Generative AI, which is currently what we call “AI”, is trained on pre-existing stuff. It’s great at doing “easy”, boring stuff that people have been doing over and over again, but it won’t “solve problems” the way an engineer does, and it won’t come up with “outside the box” thinking to solve complex issues. It does the most probable thing, so it won’t be connecting far-apart dots. That’s not what it’s designed to do.

Now, we’re starting to train students over five years of higher education to become “AI and no-code experts” (true story: the main school I work at is preparing this master’s degree for next year). We’re seeing the rise of “prompt engineers” as a job title. Engineers. We’re just inventing new interfaces to tell computers what to do, yet we consider that we still need the same level of skill to do it. (Except we don’t, not really. As I’ve said in a previous post, you’re not a car mechanic because you’ve learned how to drive a car: knowing how to use AI is not equivalent to knowing how to make AI, or even how to do what it does by yourself.)

People who are saying that Artificial General Intelligence is almost there are straight-out lying; they’re just trying to drive investment. Our brains have evolved over hundreds of millions of years to do what they do. Through trial and error, sure, but can we really say that we’ve achieved a similar level of intellect in just a few decades? How arrogant would that be? We have seen a few very promising results, including a weirdly flirty ChatGPT. But while ChatGPT is getting better and better at complimenting tech bros, faking being your slutty girlfriend (note: search result from DuckDuckGo, because the actual websites require being over 18. You’ve been warned), or writing essays full of completely wrong, hallucinated info, I don’t see it solving climate change quite yet.

Also, with more and more AI content out there, AI might have an upcoming “eating its own vomit” problem, as models end up trained on AI-generated output, and that’s not gonna solve itself. We’ll need AI experts, data scientists and a whole lot of people on board.

Complexity is here to stay. Tools are tools. Having a hammer will help you drive nails into your board, but it won’t design the shelf. Right now, AI can design a shelf by mixing a lot of existing shelves, but if you want it to output something new, there’s a good chance you’re gonna end up with either another pre-existing shelf, a shelf that can’t handle the weight of a book, or, most likely, both.

Don’t fall for the trap of “Complexity is gone! Now is the time for complex stuff with no skill!” It’s marketing. It’s just marketing.

So, students: learn how to code. Don’t let AI do your homework and pass up the opportunity to practice and to learn. Learn free and open-source technologies. Don’t always grab the low-hanging fruit. Learn how to self-host. Learn how to make f*cking shelves. Try stuff. Fail, try again, invent, create, innovate. Give yourself the tools and skills to do all of that.